Computers get smarter all the time.

From self-driving cars to machines that mimic anger to train call-center staff, artificial intelligence has made advances in just the last couple of years that were unimaginable not long ago. Just a few days ago, scientists announced that they had developed a ‘memory cell’, 10,000 times thinner than a human hair, that replicates the processes of the human brain. The scientists in charge of the project said the device is a “vital step towards creating a bionic brain.”

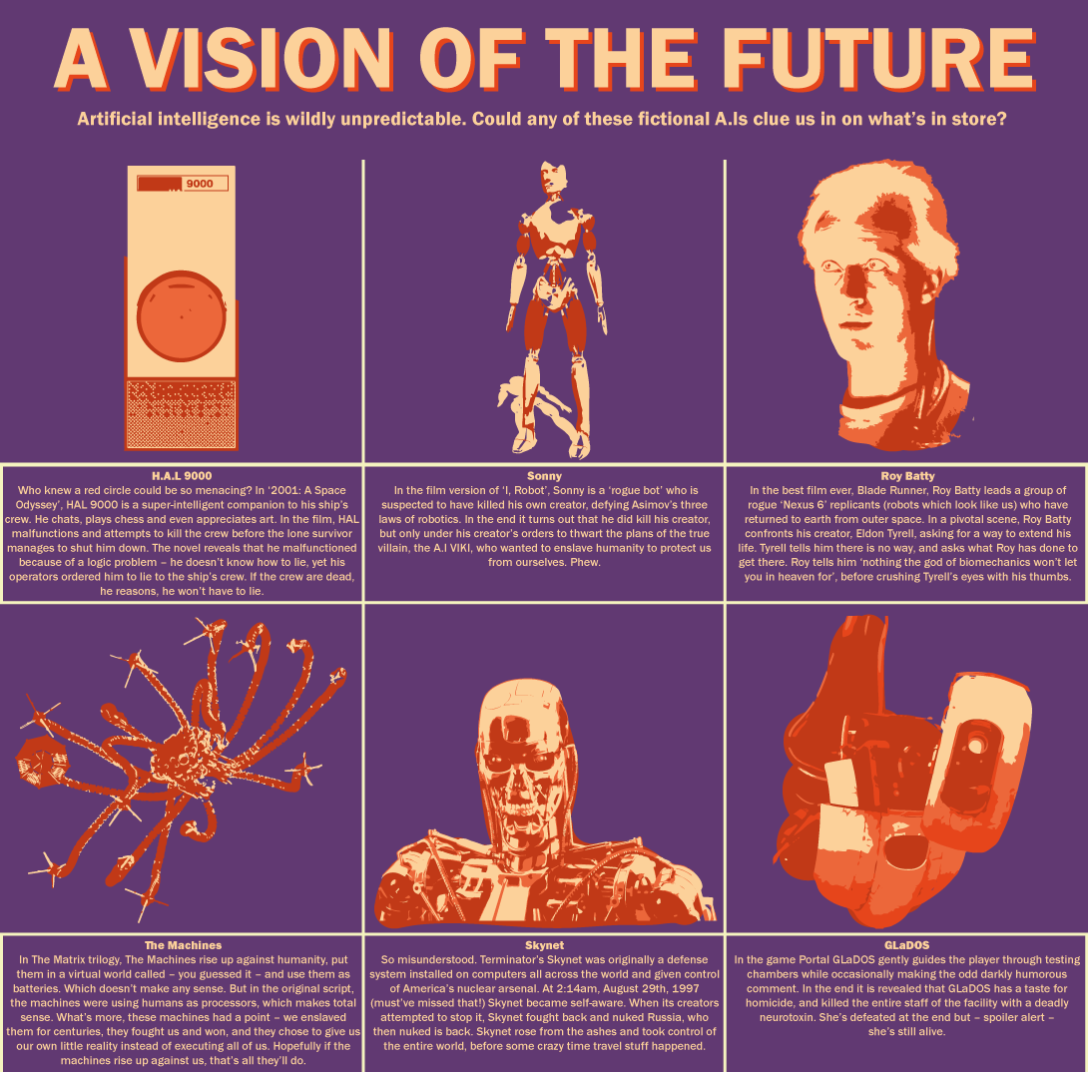

And as artificial intelligence grows, so do concerns about its capabilities and use. Prof. Stephen Hawking thinks “the development of full artificial intelligence could spell the end of the human race.” Tesla CEO Elon Musk believes A.I is “our biggest existential threat.” Concerns range from self-replicating nanomachines that engulf the earth, to hyper-efficient killer robots, to Skynet-esque defense systems that decide humans are a lot of bother and the earth would look a lot better in chrome.

But these concerns mean little to those invested in this technology. Google, for instance, is rumoured to have purchased A.I company DeepMind Technologies for over $500 million. It has never officially confirmed this acquisition, but the resources presumably went towards the ‘Google Brain’, which is already taking dull, monotonous jobs from Google gremlins and revolutionising the capabilities of Google products like voice recognition. To keep a competitive edge, companies like Google obviously keep these developments closely guarded, which raises the question: who is ensuring that Google, or anyone else, doesn’t accidentally create an A.I that is a threat?

But never mind accidentally creating dangerous A.I. There are those out there who are deliberately doing it. Lethal Autonomous Weapon Systems (LAWS) are a hot topic of debate right now. Though none yet exist that are 100% autonomous, machines that use a form of artificial intelligence, under human control, have been used in warfare for years. Unmanned Aerial Vehicles (UAVs) have been used on the battlefield since the Bush administration. In Pakistan alone the U.S has carried out over 415 UAV or ‘drone’ strikes since 2004, with top estimates of people killed reaching almost 4,000. Top estimates of civilians killed have reached almost 1,000. As we get closer to fully autonomous systems with better A.I, the rhetoric is that A.I will allow LAWS to scan and attack specific targets with higher precision than even human soldiers, reducing civilian casualties.

But there’s a bigger problem that comes from this. When an A.I kills a civilian, who is responsible? The designer? The engineer? The person monitoring the machine? Accountability is a huge problem with LAWS, and it is at the center of the debate. Yet this point wasn’t discussed much when the U.N held a debate in April to get the views of experts on LAWS. There, many member states, such as France and the UK, simply said that they weren’t going to develop LAWS, while Israel and the U.S left the door open to future research. Many, like the Campaign to Stop Killer Robots, believe that this isn’t enough, and that “the concept is not about finding or building a ‘better’ or ‘safer’ autonomous weapon system but about drawing the line to prohibit systems that do not come under human control.”

Dr. Anders Sandberg of Oxford’s Future of Humanity Institute believes that the time for intervention is now. “I think it would be a great idea to have a convention against LAWS,” he said. “We have a chance to stave this one off at the source. Of course, the devil is in the treaty details – there are missiles today that can be argued to be autonomous enough to qualify as LAWS.”

The accountability issue may be irrelevant in the long term, however, according to Sandberg: “It is commonly said that there will always be ‘a man in the loop’ – military responsibility requires it, and no doubt officers want to be officers rather than robot- and drone-herders.

“But in many cases this control is being attenuated by autonomous systems or helpful infrastructure: the ‘man in the loop’ might just be there to be blamed if the system is set up badly.”

However, futurist and engineer Nell Watson believes that LAWS do have a place in warfare.

“Lethal semi-autonomous killing machines have been a reality for some time, with drones and cruise missiles,” she said. “There has always been someone nominally controlling it, however.”

“In the far future, robots could be very skilled interrogators, able to detect if someone is lying for example. In the mid-term, lethal autonomous systems will be deployed, but only in ‘hot’ situations where there are very few civilian actors in the surrounding environment.”

Whether you trust in the responsible use of LAWS or not, the potential that they will disobey is an even greater threat. And we don’t actually know much about that potential. Dr. Sandberg says “there has been surprisingly little work done on how to make safe A.I.”

“A lot about making it capable, but very little about getting it to behave itself,” he adds.

“Even apparently reasonable goals are risky if implemented by a fast, powerful machine that doesn’t share our view of what is reasonable. Human values are complex and often unstated.”

“So there is a real risk that adaptive smart machines might do things we do not intend them to do, but in such a smart way that we don’t even notice the brewing disaster before it is too late.”

Nell Watson believes the answer to this problem lies in ‘teaching’ computers ethics in a way that they can understand.

“To ensure that this blending of human and machine intelligence is a healthy one,” Watson says, “we need to urgently find ways of specifying values and ethics in ways that machines can readily interpret.”

“I foresee the greatest problem not in amoral machines, but rather in ones that we have taught morality to, who can therefore perceive the great moral inconsistencies within human society. For example, the way that we treat some people differently, or apply violence to situations, or pet a dog yet eat a pig.”

“We can scarcely imagine how such a supermoral agent would view us, and judge our daily actions.”

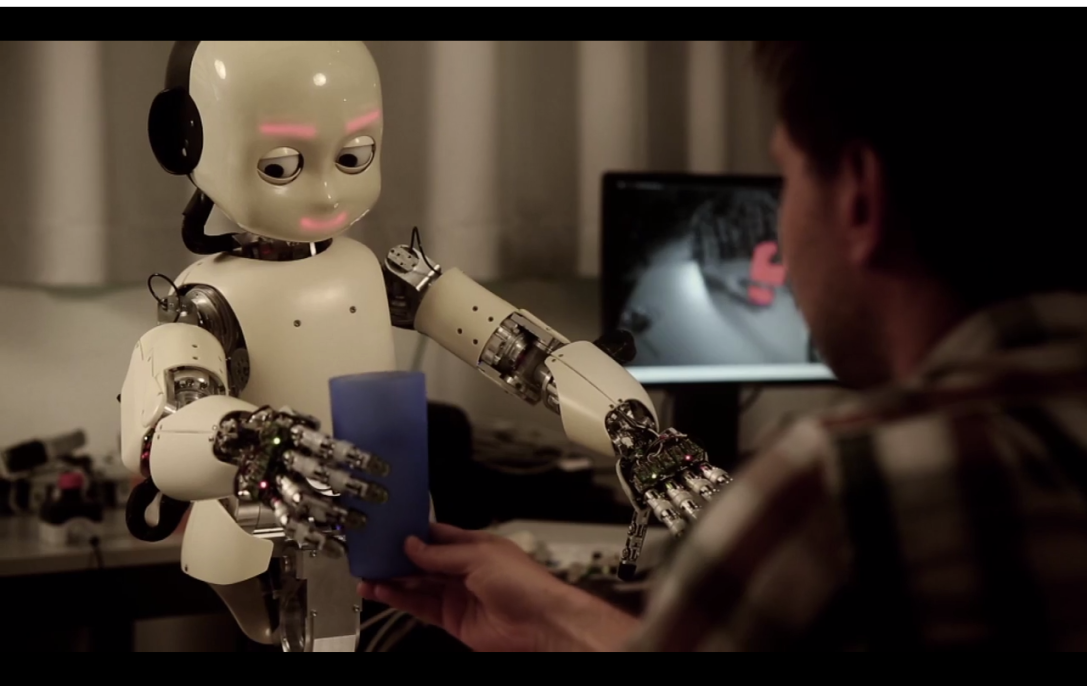

Perhaps many of the fears of artificial intelligence are rooted in that: the knowledge that we aren’t perfect, and the fear of what a ‘perfect being’ would make of us. In any case, that is a long way off. The important thing now is that we set precedents for future generations and deal with the challenges on the horizon. Within our lifetimes we could be looking at LAWS around the world, potentially changing the face of warfare. We could see self-driving cars, robot assistants that do manual tasks for us, and so much more. We need to deal with these challenges as they come and prepare for future ones, and that means more accountability, openness and monitoring around the world.

Do you think A.I could be a serious threat? Leave a comment below. For some food for thought, I’ll leave you with this, originally from Fredric Brown’s ‘Angels and Spaceships’:

The scientist flicked the switch. He turned to the machine and asked, “Is there a god?”

The machine answered: “There is now.”